If you have spent any time researching monitoring tools, you have probably encountered two terms over and over: synthetic monitoring and real user monitoring (RUM). Both measure performance and availability, but they do it in fundamentally different ways. Choosing the wrong approach -- or ignoring one entirely -- can leave blind spots in your observability stack that cost you users and revenue.

This guide breaks down how each approach works, where each one excels, and how to decide which combination is right for your API or web application. If you want a deeper introduction to synthetic monitoring specifically, start with our guide on what synthetic monitoring is and how scripted tests keep APIs healthy.

What Is Synthetic Monitoring?

Synthetic monitoring uses automated, scripted tests that run at scheduled intervals from predefined locations. These tests simulate user actions -- sending HTTP requests, loading pages, completing multi-step transactions -- without any real user being involved. The monitoring service generates the traffic itself, so results are controlled, repeatable, and available 24 hours a day regardless of whether anyone is actually using your application.

Common synthetic tests include simple HTTP pings that check whether an endpoint returns a 200 status, browser-based scripts that navigate through a checkout flow, and multi-step API transactions that validate an entire business workflow from authentication to data retrieval.
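
A multi-step API transaction is a chain of dependent requests. Each step can reuse data from the previous one (such as an auth token), and the whole check fails at the first broken step. The following Python sketch shows only that control flow. The HTTP calls are replaced by plain functions, so `login`, `fetch_orders`, `check_orders_not_empty`, and the shared context dictionary are hypothetical stand-ins, not any monitoring tool's API.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Optional

# A step receives the shared context (e.g. a token from an earlier step)
# and raises an exception when its assertion fails.
Step = Callable[[dict], None]


@dataclass
class TransactionResult:
    passed: bool
    failed_step: Optional[str]
    error: Optional[str]
    step_ms: dict = field(default_factory=dict)


def run_transaction(steps: list[tuple[str, Step]]) -> TransactionResult:
    """Run steps in order and stop at the first failure (illustrative sketch)."""
    context: dict = {}
    timings: dict = {}
    for name, step in steps:
        start = time.perf_counter()
        try:
            step(context)
        except Exception as exc:  # any failing step marks the transaction as down
            timings[name] = (time.perf_counter() - start) * 1000
            return TransactionResult(False, name, str(exc), timings)
        timings[name] = (time.perf_counter() - start) * 1000
    return TransactionResult(True, None, None, timings)


# Hypothetical steps standing in for real HTTP requests.
def login(ctx: dict) -> None:
    ctx["token"] = "abc123"  # a real check would POST credentials and read a token


def fetch_orders(ctx: dict) -> None:
    if "token" not in ctx:
        raise AssertionError("no auth token from previous step")
    ctx["orders"] = [{"id": 1}]


def check_orders_not_empty(ctx: dict) -> None:
    assert ctx["orders"], "expected at least one order"
```

Calling `run_transaction([("login", login), ("fetch_orders", fetch_orders), ("check", check_orders_not_empty)])` passes. Swapping the first two steps fails at `fetch_orders`, and the later steps never run, which is the behavior you want from a workflow check.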

Synthetic monitoring is proactive. It detects problems before real users encounter them because the tests run continuously on a fixed schedule. If your API starts returning 500 errors at 3 AM, a synthetic check will catch it within minutes even though no customer is online.

For a comprehensive list of tools in this space, see our guide to the best synthetic monitoring tools.

What Is Real User Monitoring (RUM)?

Real user monitoring, often abbreviated RUM, collects performance and experience data from actual users as they interact with your application. A lightweight JavaScript snippet or SDK embedded in your frontend captures metrics like page load time, time to interactive, and the Core Web Vitals (largest contentful paint, interaction to next paint, and cumulative layout shift) for every real session. Interaction to next paint (INP) replaced first input delay (FID) as a Core Web Vital in March 2024.

RUM is passive. It does not generate any artificial traffic. Instead, it observes and records what is actually happening for your user base. This means RUM data reflects real-world conditions: the slow 3G connection a user has in rural Indonesia, the outdated browser someone is running on a corporate laptop, or the CDN cache miss that only affects users in a specific region.
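
To turn those real-world sessions into something actionable, RUM data is usually aggregated per segment. Core Web Vitals are assessed at the 75th percentile of page loads. The sketch below groups beacons by region and device and rates each segment's p75 largest contentful paint against the published LCP thresholds (good at or below 2.5 s, poor above 4 s). The beacon field names (`region`, `device`, `lcp_ms`) are assumptions for illustration, not any RUM vendor's schema.

```python
import math
from collections import defaultdict

# Core Web Vitals thresholds for LCP, in milliseconds:
# good <= 2500, needs improvement <= 4000, poor above that.
LCP_GOOD_MS = 2500
LCP_POOR_MS = 4000


def p75(values: list[float]) -> float:
    """75th percentile using the nearest-rank method."""
    ordered = sorted(values)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


def rate_lcp(value_ms: float) -> str:
    if value_ms <= LCP_GOOD_MS:
        return "good"
    if value_ms <= LCP_POOR_MS:
        return "needs improvement"
    return "poor"


def lcp_by_segment(beacons: list[dict]) -> dict[tuple[str, str], tuple[float, str]]:
    """Group beacons by (region, device) and rate each segment's p75 LCP."""
    groups: dict[tuple[str, str], list[float]] = defaultdict(list)
    for beacon in beacons:
        groups[(beacon["region"], beacon["device"])].append(beacon["lcp_ms"])
    result = {}
    for segment, values in groups.items():
        value = p75(values)
        result[segment] = (value, rate_lcp(value))
    return result
```

A segment such as slow mobile connections in one country can rate "poor" even while the overall average looks healthy. That is exactly the kind of signal a synthetic test from a datacenter will not produce.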

The strength of RUM is that it shows you exactly what your users experience. The limitation is that it only works when users are present. If you have zero traffic between midnight and 6 AM, RUM has nothing to report during that window.

Key Differences: Synthetic Monitoring vs RUM

The following table summarizes the core differences across eight dimensions. Understanding these trade-offs is essential for building a monitoring strategy that actually works.

| Dimension | Synthetic Monitoring | Real User Monitoring (RUM) |
|---|---|---|
| Data source | Scripted bots and automated tests | Actual user sessions and interactions |
| Coverage | Predefined paths and locations only | Every user, device, browser, and location |
| When issues are detected | Proactively, before users are affected | Reactively, as users encounter the problem |
| Performance overhead | None on production (tests run externally) | Small overhead from the embedded SDK or snippet |
| Cost model | Per check or per test run (predictable) | Per session or page view (scales with traffic) |
| Complexity | Simple for basic checks; complex for multi-step scripts | Easy to install; complex to analyze large data volumes |
| Off-hours coverage | Full coverage regardless of traffic | No data when there is no traffic |
| Environment accuracy | Controlled and consistent, not representative | Highly representative of real conditions |

When to Use Synthetic Monitoring

Synthetic monitoring is the right choice when you need baseline availability checks that run around the clock, regardless of user traffic patterns. These are the scenarios where synthetic monitoring delivers the most value:

- Pre-launch validation -- You are about to deploy a new API or web application and need to verify that endpoints respond correctly from multiple regions before any real users arrive.

- SLA monitoring -- You have committed to 99.9% uptime for customers and need continuous proof that your service meets that target. Synthetic checks provide the consistent, unbiased data that SLA reports require.

- Off-hours coverage -- Your user base is concentrated in a single timezone, but your API serves global integrations. Synthetic monitors catch failures during your quiet hours when RUM would have no data.

- Regression detection in CI/CD -- Running synthetic API tests as part of your deployment pipeline catches performance regressions before they reach production.

- Third-party dependency monitoring -- You depend on external APIs (payment gateways, authentication providers, CDNs) and need to know immediately when they degrade, regardless of whether your users happen to be triggering those code paths.
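
For the SLA case, the useful numbers are the downtime budget and how much of it you have spent. A 99.9% target over a 30-day month allows 30 × 24 × 60 × 0.001 = 43.2 minutes of downtime. The sketch below assumes evenly spaced checks from a single location and counts each failed check as one full interval of downtime. That is a simplification: real SLA definitions vary by contract, and multi-region checks need their own aggregation rule.

```python
def downtime_budget_minutes(slo: float, period_days: int = 30) -> float:
    """Allowed downtime for an availability target over a period."""
    return period_days * 24 * 60 * (1 - slo)


def uptime_from_checks(results: list[bool]) -> float:
    """Fraction of passing checks; assumes evenly spaced checks from one location."""
    if not results:
        raise ValueError("no check results")
    return sum(results) / len(results)


def budget_remaining_minutes(results: list[bool], interval_min: float, slo: float,
                             period_days: int = 30) -> float:
    """Error budget left, counting each failed check as one interval of downtime."""
    downtime = results.count(False) * interval_min
    return downtime_budget_minutes(slo, period_days) - downtime
```

With 1-minute checks, 12 failed checks against a 99.9% target leave roughly 43.2 - 12 = 31.2 minutes of budget for the rest of the period.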

For teams building APIs and microservices, endpoint-level synthetic monitoring is often the most practical starting point. Our endpoint monitoring guide covers this in detail.

When to Use Real User Monitoring

RUM is the right choice when you need to understand the actual experience your users are having, including the long tail of slow sessions that synthetic tests cannot replicate. These scenarios favor RUM:

- Frontend performance optimization -- You want to improve Core Web Vitals (LCP, INP, CLS) based on real data from real devices and networks, not from a controlled test environment.

- Geographic performance analysis -- You need to identify which regions have the worst user experience so you can optimize CDN configuration, add edge locations, or adjust routing rules.

- Device and browser segmentation -- Your application behaves differently on mobile versus desktop, or on Chrome versus Safari. RUM breaks down metrics by these dimensions automatically.

- Conversion funnel analysis -- You want to correlate performance metrics with business outcomes: do users on slow connections abandon checkout more often? RUM connects performance data to user behavior.

- High-traffic applications -- When you have millions of sessions per month, RUM provides statistical confidence that no synthetic test can match. Outliers, edge cases, and intermittent failures all surface naturally in the data.
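
The conversion funnel analysis above boils down to bucketing sessions by load time and comparing outcome rates across buckets. The sketch below assumes each session record carries hypothetical `load_ms` and `converted` fields, and the bucket edges are arbitrary choices for illustration. A gap between buckets is a signal worth investigating, not proof that speed alone caused it.

```python
from bisect import bisect_right

# Hypothetical bucket edges in milliseconds: [0, 1s), [1s, 2.5s), [2.5s, 4s), [4s, inf).
EDGES_MS = [1000, 2500, 4000]
LABELS = ["<1s", "1-2.5s", "2.5-4s", ">=4s"]


def conversion_by_speed(sessions: list[dict]) -> dict[str, float]:
    """Conversion rate per load-time bucket; buckets with no sessions are omitted."""
    totals = [0] * len(LABELS)
    conversions = [0] * len(LABELS)
    for session in sessions:
        i = bisect_right(EDGES_MS, session["load_ms"])  # index of the bucket
        totals[i] += 1
        if session["converted"]:
            conversions[i] += 1
    return {LABELS[i]: conversions[i] / totals[i]
            for i in range(len(LABELS)) if totals[i]}
```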

When to Use Both

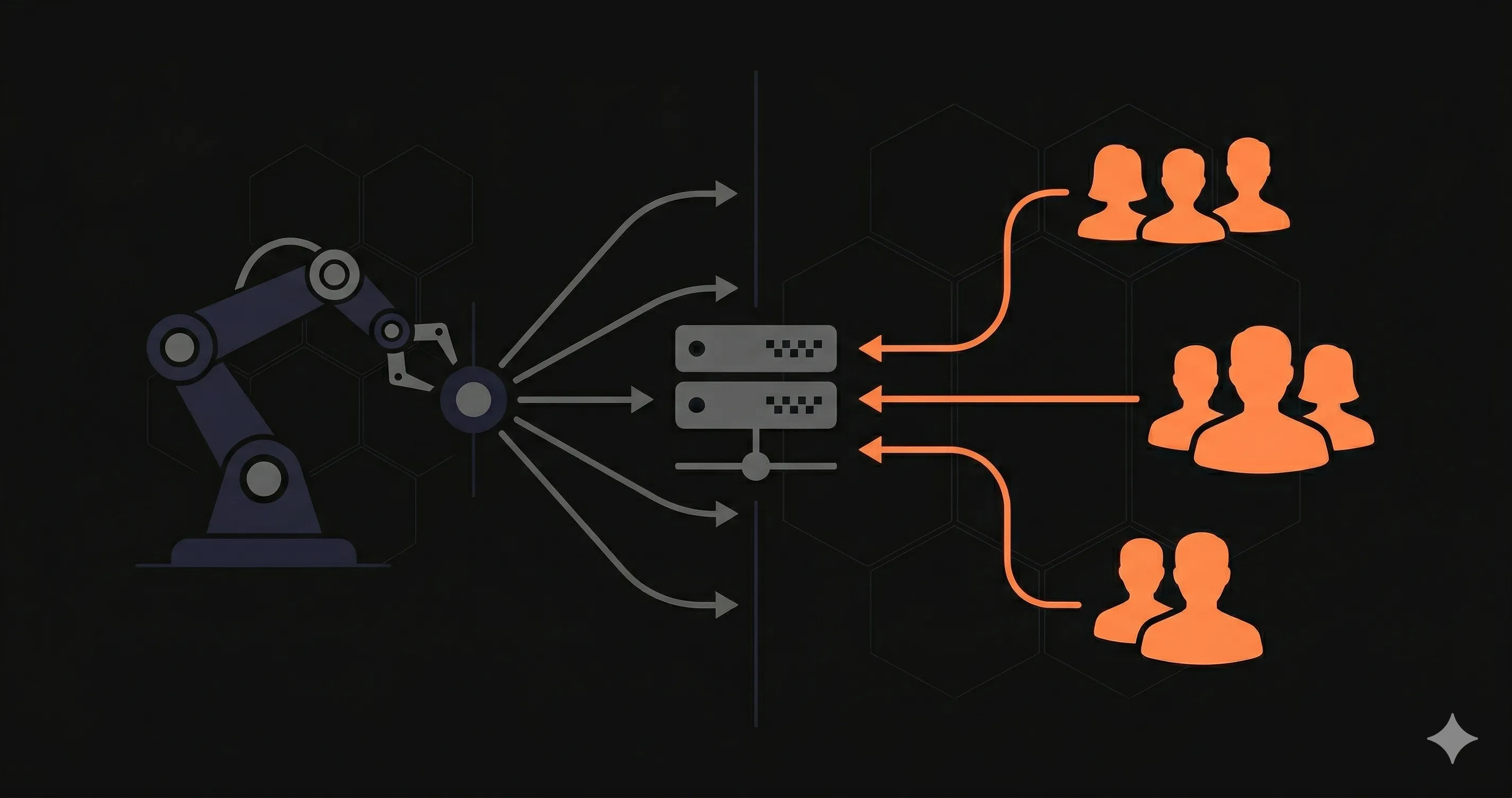

Most mature engineering teams use synthetic monitoring and RUM together because each approach fills the gaps left by the other. Here is how the combination works in practice:

Synthetic monitoring acts as your early warning system. It runs 24/7 from fixed locations with consistent network conditions, so you get clean baseline data and immediate alerts when something breaks. If your API starts returning errors at 3 AM, the synthetic check catches it within one check interval -- typically 1 to 5 minutes.
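
"Within one check interval" describes the typical case rather than the upper bound. In the worst case the outage starts just after a passing check, and the next check runs one full interval later. A hanging endpoint may only fail once the check's timeout expires, and some tools can be configured to require several consecutive failures before alerting, to avoid false alarms. The arithmetic below is a sketch under those assumptions. The parameters `timeout_s` and `confirmations` are illustrative, not settings from a specific product.

```python
def worst_case_detection_s(interval_s: float, timeout_s: float,
                           confirmations: int = 1) -> float:
    """Worst-case seconds from outage start to alert, assuming timeout_s < interval_s.

    The outage begins just after a passing check, so the first failing check
    starts one interval later. Each extra confirmation adds another interval,
    and the last check may take the full timeout to fail.
    """
    if confirmations < 1:
        raise ValueError("confirmations must be >= 1")
    return confirmations * interval_s + timeout_s


for interval in (60, 300):
    print(interval,
          worst_case_detection_s(interval, timeout_s=10),
          worst_case_detection_s(interval, timeout_s=10, confirmations=2))
# prints "60 70 130", then "300 310 610"
```

An endpoint that fails fast with a 500 is detected sooner than this bound, because the check does not have to wait for its timeout.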

RUM provides the ground truth. Once users arrive, RUM shows you what they actually experience. A synthetic test from a datacenter in Virginia might report 200ms response times, but RUM reveals that users on mobile networks in Southeast Asia are seeing 4-second load times because of a misconfigured CDN edge.
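
One way to act on that comparison is to flag regions where real users are far slower than the synthetic baseline. The sketch below compares each region's RUM p75 against a single synthetic baseline using a ratio threshold. The region names, the input shape, and the 3x default are assumptions for illustration. A synthetic API response time and a RUM page load time are not the same metric, so the ratio works as a triage signal rather than a precise measurement.

```python
import math


def p75(values: list[float]) -> float:
    """75th percentile using the nearest-rank method."""
    ordered = sorted(values)
    return ordered[math.ceil(0.75 * len(ordered)) - 1]


def flag_divergent_regions(synthetic_baseline_ms: float,
                           rum_samples_ms: dict[str, list[float]],
                           max_ratio: float = 3.0) -> list[str]:
    """Regions whose RUM p75 exceeds max_ratio times the synthetic baseline."""
    flagged = []
    for region, samples in sorted(rum_samples_ms.items()):
        if samples and p75(samples) > max_ratio * synthetic_baseline_ms:
            flagged.append(region)
    return flagged


rum = {"us-east": [180, 220, 250, 300], "se-asia": [3500, 4000, 4200, 4800]}
print(flag_divergent_regions(200, rum))  # ['se-asia']
```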

The combined approach covers these critical blind spots:

- Synthetic monitoring covers off-hours when RUM has no data

- RUM covers the infinite variety of real-world conditions that synthetic tests cannot replicate

- Synthetic tests validate critical paths even when user traffic does not exercise them frequently

- RUM identifies performance issues that only manifest under real-world device, browser, and network combinations

How Nurbak Fits Into This Picture

Nurbak Watch provides scheduled health checks from 4 global regions (US, Brazil, France, Japan) that function as lightweight synthetic monitoring for APIs. Every check measures DNS resolution time, TLS handshake duration, time to first byte, full response time, and response size. You get multi-region performance baselines without configuring complex browser scripts or managing test infrastructure.
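
To make those phase timings concrete, here is a minimal sketch of how a single check can split one HTTPS request into DNS, TCP connect, TLS handshake, time to first byte, and total time, using only the Python standard library. It is not Nurbak's implementation. It assumes a single HTTP/1.1 GET with `Connection: close`, uses the first resolved address, follows no redirects, and counts response size including headers.

```python
import socket
import ssl
import time
from dataclasses import dataclass


@dataclass
class ProbeResult:
    status: int
    dns_ms: float
    connect_ms: float
    tls_ms: float       # near zero when use_tls is False
    ttfb_ms: float      # end of handshake -> first response byte
    total_ms: float     # DNS start -> last byte received
    size_bytes: int     # raw response size, headers included


def _ms(start: float, end: float) -> float:
    return (end - start) * 1000


def probe(host: str, port: int = 443, path: str = "/", use_tls: bool = True,
          timeout: float = 10.0) -> ProbeResult:
    """Time the phases of one HTTP/1.1 GET request (illustrative sketch)."""
    t0 = time.perf_counter()
    # DNS resolution: take the first address returned.
    family, socktype, proto, _, addr = socket.getaddrinfo(
        host, port, type=socket.SOCK_STREAM)[0]
    t_dns = time.perf_counter()

    sock = socket.socket(family, socktype, proto)
    sock.settimeout(timeout)
    try:
        sock.connect(addr)  # TCP handshake
        t_conn = time.perf_counter()
        if use_tls:
            # Wrapping an already-connected socket performs the TLS handshake now.
            ctx = ssl.create_default_context()
            sock = ctx.wrap_socket(sock, server_hostname=host)
        t_tls = time.perf_counter()

        request = (f"GET {path} HTTP/1.1\r\nHost: {host}\r\n"
                   "Connection: close\r\n\r\n")
        sock.sendall(request.encode("ascii"))
        chunks = [sock.recv(1)]  # blocks until the first response byte arrives
        t_first = time.perf_counter()
        while chunk := sock.recv(65536):  # read until the server closes
            chunks.append(chunk)
        t_end = time.perf_counter()
    finally:
        sock.close()

    raw = b"".join(chunks)
    status = int(raw.split(b" ", 2)[1])  # e.g. b"HTTP/1.1 200 OK ..." -> 200
    return ProbeResult(status, _ms(t0, t_dns), _ms(t_dns, t_conn), _ms(t_conn, t_tls),
                       _ms(t_tls, t_first), _ms(t0, t_end), len(raw))
```

With network access, `probe("example.com")` returns a `ProbeResult` for `https://example.com/`. Running the same probe from several regions is what turns these numbers into a multi-region baseline.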

For API teams, this covers the most important use case: confirming that your endpoints are healthy and performant from the locations where your users and integrations live. Nurbak does not replace a full RUM solution for frontend performance analysis, but it does eliminate the most common blind spot -- not knowing that your API is down or slow until a user reports it.

If your primary concern is API availability and response time across regions, Nurbak gives you the synthetic monitoring layer without the complexity or cost of enterprise platforms. Pair it with a RUM tool on your frontend for complete coverage across both the API layer and the user experience layer.

For a broader look at tools available in this space, check our synthetic monitoring tools comparison and our endpoint monitoring guide.

Frequently Asked Questions

What is the main difference between synthetic monitoring and real user monitoring?

Synthetic monitoring uses scripted, automated tests that simulate user requests from predefined locations at regular intervals. Real user monitoring (RUM) collects performance data from actual users interacting with your application in real time. Synthetic monitoring is proactive and controlled; RUM is passive and reflects real-world conditions.

Can I use both synthetic monitoring and RUM at the same time?

Yes, and most mature engineering teams do. Synthetic monitoring catches outages and regressions before users notice them, while RUM reveals how real users experience your application across different devices, browsers, and network conditions. Together they provide complete observability.

Is real user monitoring more expensive than synthetic monitoring?

It depends on traffic volume. RUM is typically priced per session or per page view, so costs scale with your user base. Synthetic monitoring is priced per check or per test run, which is more predictable. For high-traffic applications, RUM can become significantly more expensive. For low-traffic APIs, synthetic monitoring may cost more per data point because you are paying for checks regardless of real traffic.
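
A back-of-envelope comparison makes the trade-off visible. Synthetic volume is endpoints × regions × checks per period, regardless of traffic, while RUM volume follows sessions. For example, 5 endpoints checked every minute from 4 regions over 30 days is 5 × 4 × 43,200 = 864,000 check runs. The unit prices below are hypothetical placeholders, not any vendor's pricing.

```python
def synthetic_runs_per_month(endpoints: int, regions: int, interval_min: float,
                             days: int = 30) -> int:
    """Check executions per month; independent of real traffic."""
    return round(endpoints * regions * days * 24 * 60 / interval_min)


def monthly_cost(units: int, price_per_1000: float) -> float:
    """Cost for usage priced per 1,000 units (check runs or sessions)."""
    return units / 1000 * price_per_1000


# Hypothetical unit prices, for illustration only.
SYNTHETIC_PRICE_PER_1000_RUNS = 0.50
RUM_PRICE_PER_1000_SESSIONS = 1.00

runs = synthetic_runs_per_month(endpoints=5, regions=4, interval_min=1)  # 864000
for sessions in (10_000, 10_000_000):
    print(sessions,
          monthly_cost(runs, SYNTHETIC_PRICE_PER_1000_RUNS),
          monthly_cost(sessions, RUM_PRICE_PER_1000_SESSIONS))
# prints "10000 432.0 10.0", then "10000000 432.0 10000.0"
```

The synthetic cost stays flat while the RUM cost tracks traffic. Under these made-up prices, RUM is cheaper for a low-traffic app and far more expensive for a high-traffic one.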

Does Nurbak offer synthetic monitoring or real user monitoring?

Nurbak Watch provides scheduled health checks from 4 global regions (US, Brazil, France, Japan) that function as lightweight synthetic monitoring for APIs. It measures DNS, TLS, TTFB, and full response time at regular intervals. While it is not a full browser-based synthetic tool or a RUM platform, it covers the most critical use case for API teams: knowing whether your endpoints are healthy and fast from multiple locations around the world.