Distributed tracing is one of those tools that sounds essential until you try to implement it. Then you realize it requires instrumenting every service, managing a storage backend, deploying collectors, and training your team to read trace waterfalls. For the right architecture, that investment pays off. For many teams, it is overkill.

This guide covers the five best distributed tracing tools in 2026, compares them honestly, and then asks the question most articles skip: do you actually need one?

What Distributed Tracing Actually Does

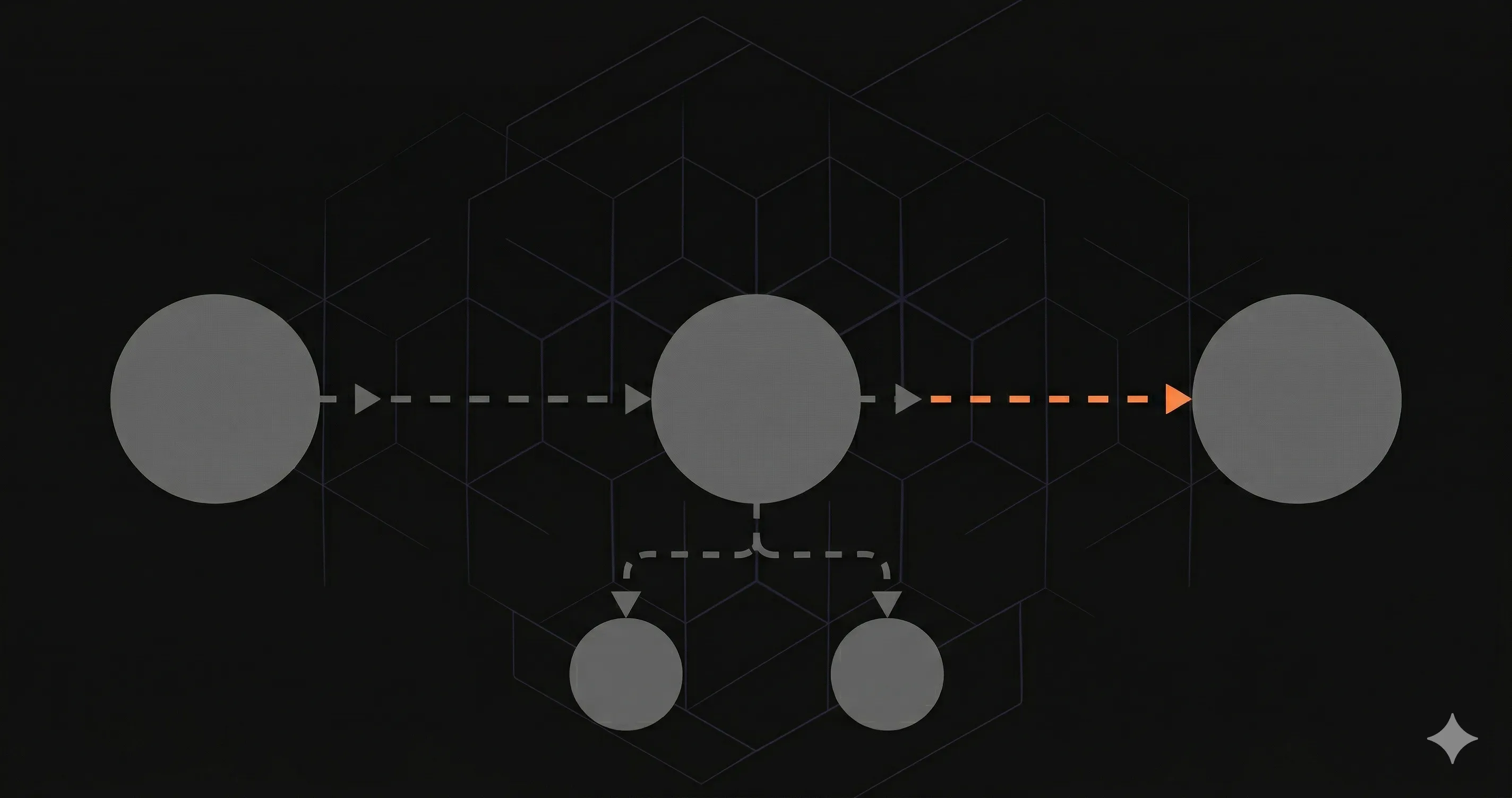

Distributed tracing follows a single request as it travels across multiple services. Suppose a user hits your API gateway, and the gateway calls an auth service, then a payment service, then a notification service. Tracing shows you that entire chain as one connected record.

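Each service adds one or more "spans" to the trace, typically one per unit of work such as handling an incoming request or making a database call. A span records: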

- Service name

- Operation name

- Start time and duration

- Status (success/error)

- Custom attributes (user ID, request ID, etc.)
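To make the list concrete, here is a minimal TypeScript sketch of a span record and of how a set of spans becomes a waterfall-style timeline. It assumes each span also carries a trace ID, its own span ID, and its parent's span ID, since those links are what turn individual spans into a tree. The field names and the text rendering are illustrative, not any tool's schema.

```typescript
// Illustrative span shape: the fields listed above plus the IDs that link
// spans into a tree. Not any particular tool's schema.
interface Span {
  traceId: string;
  spanId: string;
  parentId?: string; // undefined for the root span
  service: string;
  operation: string;
  startMs: number; // start time in milliseconds
  durationMs: number;
  status: "ok" | "error";
  attributes: Record<string, string>;
}

// Render one trace as an indented text waterfall: children under their
// parent, siblings ordered by start time, offsets relative to the earliest span.
// Spans whose parent is missing from the input are not shown.
function renderWaterfall(spans: Span[]): string[] {
  if (spans.length === 0) return [];
  const children = new Map<string | undefined, Span[]>();
  for (const s of spans) {
    const list = children.get(s.parentId) ?? [];
    list.push(s);
    children.set(s.parentId, list);
  }
  const t0 = Math.min(...spans.map((s) => s.startMs));
  const lines: string[] = [];
  const walk = (span: Span, depth: number): void => {
    const flag = span.status === "error" ? " ERROR" : "";
    lines.push(
      `${"  ".repeat(depth)}${span.service}: ${span.operation} ` +
        `+${span.startMs - t0}ms (${span.durationMs}ms)${flag}`
    );
    const kids = (children.get(span.spanId) ?? []).slice().sort((a, b) => a.startMs - b.startMs);
    for (const kid of kids) walk(kid, depth + 1);
  };
  for (const root of children.get(undefined) ?? []) walk(root, 0);
  return lines;
}

// Example: a gateway request that fans out to auth and payment.
const trace: Span[] = [
  { traceId: "t1", spanId: "a", service: "gateway", operation: "POST /checkout", startMs: 1000, durationMs: 120, status: "ok", attributes: {} },
  { traceId: "t1", spanId: "b", parentId: "a", service: "auth", operation: "verify token", startMs: 1005, durationMs: 10, status: "ok", attributes: {} },
  { traceId: "t1", spanId: "c", parentId: "a", service: "payment", operation: "charge card", startMs: 1020, durationMs: 90, status: "error", attributes: { "user.id": "42" } },
];
console.log(renderWaterfall(trace).join("\n"));
// gateway: POST /checkout +0ms (120ms)
//   auth: verify token +5ms (10ms)
//   payment: charge card +20ms (90ms) ERROR
```

Even in this toy version, the slow, failing payment call stands out immediately, which is the whole point of the waterfall view.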

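The trace context propagates through HTTP headers (usually the traceparent header defined by the W3C Trace Context standard, which carries the trace ID and the caller's span ID), so every service can attach its spans to the same trace under the right parent. The result is a timeline, often called a "waterfall", showing exactly where time was spent and where errors occurred.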
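A minimal sketch of how a service might read an incoming traceparent header and build the one it sends downstream. It handles only version 00 of the format (version, 32-hex-digit trace ID, 16-hex-digit parent span ID, 2-hex-digit flags) and uses Math.random for new span IDs purely for illustration; in practice an OpenTelemetry SDK handles this for you.

```typescript
// W3C Trace Context "traceparent", version 00 only:
//   00-<32 hex trace-id>-<16 hex parent-id>-<2 hex trace-flags>
interface TraceContext {
  traceId: string;
  parentId: string; // span ID of the caller
  sampled: boolean; // bit 0x01 of trace-flags
}

const TRACEPARENT_RE = /^00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$/;

function parseTraceparent(header: string): TraceContext | null {
  const m = TRACEPARENT_RE.exec(header.trim());
  if (!m) return null;
  const [, traceId, parentId, flags] = m;
  // All-zero trace IDs and parent IDs are invalid.
  if (/^0+$/.test(traceId) || /^0+$/.test(parentId)) return null;
  return { traceId, parentId, sampled: (parseInt(flags, 16) & 0x01) === 0x01 };
}

// Illustration only: Math.random is not a strong random source for IDs.
function randomHex(bytes: number): string {
  let out = "";
  for (let i = 0; i < bytes; i++) {
    out += Math.floor(Math.random() * 256).toString(16).padStart(2, "0");
  }
  return out;
}

// Header for an outgoing call: same trace ID, this service's span as the parent.
function childTraceparent(ctx: TraceContext, ownSpanId: string = randomHex(8)): string {
  return `00-${ctx.traceId}-${ownSpanId}-${ctx.sampled ? "01" : "00"}`;
}

// Example using the sample header from the W3C specification.
const incoming = "00-4bf92f3577b34da6a3ce929d0e0e4736-00f067aa0ba902b7-01";
const ctx = parseTraceparent(incoming);
if (ctx) {
  console.log(childTraceparent(ctx, "b7ad6b7169203331"));
  // 00-4bf92f3577b34da6a3ce929d0e0e4736-b7ad6b7169203331-01
}
```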

1. Jaeger

Best for: Teams already invested in Kubernetes and the CNCF ecosystem.

Jaeger is the most widely deployed open-source tracing system. It was created by Uber, donated to the Cloud Native Computing Foundation (CNCF), and graduated in 2019 — meaning it passed rigorous production-readiness reviews.

Key characteristics:

- Storage backends: Elasticsearch, OpenSearch, Cassandra, Badger (local), or a gRPC plugin for custom backends. Kafka can sit between collectors and storage as an ingestion buffer.

- Sampling: Supports head-based and tail-based sampling, plus adaptive sampling that adjusts rates based on traffic volume.

- OpenTelemetry native: Since v1.35, Jaeger receives traces directly via OTLP (OpenTelemetry Protocol), making the Jaeger agent optional.

- UI: Built-in web interface for searching and viewing traces. Functional but not beautiful.

- Deployment: Kubernetes-native. Helm charts, operators, and sidecar patterns are well documented.
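The difference between head-based and tail-based sampling is easiest to see in code. The sketch below is a simplified illustration, not Jaeger's sampler configuration: the head-based function decides from the trace ID alone, and the tail-based function waits for the finished trace. The 32-bit comparison and the thresholds are assumptions chosen for readability, and adaptive sampling is not shown.

```typescript
// Head-based sampling: decide when the trace starts, from the trace ID alone,
// so every service that sees the same ID reaches the same decision.
// This sketch compares the last 8 hex digits (32 bits) against the ratio;
// production samplers differ in the details.
function headSample(traceId: string, ratio: number): boolean {
  if (ratio >= 1) return true;
  if (ratio <= 0) return false;
  const low32 = parseInt(traceId.slice(-8), 16);
  return low32 < ratio * 0x100000000; // 2^32
}

interface FinishedSpan {
  durationMs: number;
  isError: boolean;
}

// Tail-based sampling: decide after the whole trace has arrived, so the rule
// can look at outcomes. slowMs and baselineRatio are hypothetical knobs.
function tailSample(traceId: string, spans: FinishedSpan[], slowMs: number, baselineRatio: number): boolean {
  const hasError = spans.some((s) => s.isError);
  const slowest = Math.max(0, ...spans.map((s) => s.durationMs));
  if (hasError || slowest >= slowMs) return true; // always keep interesting traces
  return headSample(traceId, baselineRatio); // keep a small share of normal ones
}

// Usage: the same trace ID always gets the same head-based verdict.
const traceId = "4bf92f3577b34da6a3ce929d0e0e4736";
console.log(headSample(traceId, 0.1) === headSample(traceId, 0.1)); // true
```

Because headSample depends only on the trace ID, services agree without coordinating. tailSample can keep every error, but it needs complete traces buffered in one place, which is why tail-based sampling usually runs in a collector rather than in each service.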

Pricing: Free and open-source. You pay for infrastructure (Elasticsearch cluster, Kubernetes resources).

Downsides: Operational overhead is significant. Running Elasticsearch or Cassandra at scale requires dedicated DevOps effort. The UI lacks the query power of commercial tools like Honeycomb.

2. Zipkin

Best for: Smaller teams that want tracing without the complexity of Jaeger.

Zipkin was one of the original distributed tracing systems, created by Twitter in 2012 (inspired by Google's Dapper paper). It is simpler than Jaeger by design.

- Storage: In-memory, MySQL, Cassandra, Elasticsearch.

- Architecture: Single binary deployment possible. No separate collectors or agents required.

- Language support: Instrumentation libraries for Java (Brave), JavaScript (zipkin-js), Go (zipkin-go), Python, Ruby, and more.

- UI: Clean, simple interface. Shows traces and dependency graphs.
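Because Zipkin can run as a single server, spans can be reported to it directly over HTTP. The sketch below converts a simple span record into Zipkin's v2 JSON span shape (timestamps and durations in microseconds, service name under localEndpoint, failures marked with an error tag) and posts a batch to /api/v2/spans. The SimpleSpan shape and the local URL on Zipkin's default port 9411 are assumptions; in a real Java service, a library such as Brave would do this for you.

```typescript
// Hypothetical input shape for this sketch.
interface SimpleSpan {
  traceId: string; // 16 or 32 lowercase hex chars
  spanId: string; // 16 lowercase hex chars
  parentId?: string;
  service: string;
  name: string;
  startMs: number; // epoch milliseconds
  durationMs: number;
  error?: string; // error message, if the operation failed
  tags?: Record<string, string>;
}

// Subset of Zipkin's v2 span model used here.
interface ZipkinSpan {
  traceId: string;
  id: string;
  parentId?: string;
  name: string;
  timestamp: number; // epoch microseconds
  duration: number; // microseconds
  localEndpoint: { serviceName: string };
  tags?: Record<string, string>;
}

function toZipkinV2(s: SimpleSpan): ZipkinSpan {
  const tags: Record<string, string> = { ...(s.tags ?? {}) };
  if (s.error !== undefined) tags.error = s.error; // Zipkin flags failures with an "error" tag
  const out: ZipkinSpan = {
    traceId: s.traceId,
    id: s.spanId,
    name: s.name,
    timestamp: Math.round(s.startMs * 1000),
    duration: Math.max(1, Math.round(s.durationMs * 1000)), // round sub-microsecond spans up
    localEndpoint: { serviceName: s.service },
  };
  if (s.parentId !== undefined) out.parentId = s.parentId;
  if (Object.keys(tags).length > 0) out.tags = tags;
  return out;
}

// Assumed endpoint: a local Zipkin server on its default port.
async function sendToZipkin(spans: SimpleSpan[], url = "http://localhost:9411/api/v2/spans"): Promise<void> {
  const res = await fetch(url, {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify(spans.map(toZipkinV2)),
  });
  if (!res.ok) throw new Error(`Zipkin rejected spans: HTTP ${res.status}`);
}

const example = toZipkinV2({
  traceId: "463ac35c9f6413ad",
  spanId: "a2fb4a1d1a96d312",
  service: "backend",
  name: "get /api",
  startMs: 1556604172355,
  durationMs: 1.431,
});
console.log(JSON.stringify(example));
```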

Pricing: Free and open-source.

Downsides: Fewer sampling strategies than Jaeger. The community is smaller and less active. Not a CNCF project, so long-term governance is less structured.

3. Grafana Tempo

Best for: Teams already using Grafana for dashboards and Loki for logs.

Tempo is Grafana Labs' tracing backend, and its architecture is genuinely clever. Instead of indexing traces (which is expensive), Tempo stores them in object storage (S3, GCS, Azure Blob) and relies on trace IDs for lookup.

- Storage: Object storage only. No Elasticsearch or Cassandra needed. This makes it dramatically cheaper to run at scale.

- Search: TraceQL query language for searching by attributes, duration, and status. You can also find traces through Loki logs or Grafana metrics (exemplars).

- Integration: Deep integration with Grafana, Loki (logs), and Mimir (metrics). The "Traces to Logs" and "Traces to Metrics" links make correlation easy.

- OpenTelemetry: Accepts OTLP, Jaeger, and Zipkin formats natively.
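Both lookup paths described above map onto Tempo's HTTP API. This sketch only builds request URLs: one fetches a trace by ID from /api/traces/, the other runs a TraceQL query through /api/search over a time window given in Unix seconds. The base URL (http://localhost:3200, the port used in Tempo's example configurations) and the "payment" service name are assumptions.

```typescript
// Assumed base URL for a local Tempo instance.
const TEMPO_URL = "http://localhost:3200";

// Lookup by trace ID: the path Tempo's storage design is optimized for.
function traceByIdUrl(traceId: string, base: string = TEMPO_URL): string {
  return `${base}/api/traces/${encodeURIComponent(traceId)}`;
}

// TraceQL search, limited to a time window (Unix seconds) and a result count.
function searchUrl(traceql: string, startSec: number, endSec: number, limit = 20, base: string = TEMPO_URL): string {
  const params = new URLSearchParams({
    q: traceql,
    start: String(startSec),
    end: String(endSec),
    limit: String(limit),
  });
  return `${base}/api/search?${params.toString()}`;
}

// Failed spans slower than 500 ms in a (hypothetical) "payment" service.
const slowPaymentErrors = '{ resource.service.name = "payment" && status = error && duration > 500ms }';
const now = Math.floor(Date.now() / 1000);
console.log(searchUrl(slowPaymentErrors, now - 3600, now));
// A client would then GET these URLs, for example with fetch().
```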

Pricing: Free (self-hosted) or Grafana Cloud (free tier with 50GB/month traces).

Downsides: Search is limited compared to Jaeger or Honeycomb unless you use TraceQL extensively. Best experience requires the full Grafana stack (Tempo + Loki + Mimir), which is its own ecosystem to manage.

4. Datadog APM

Best for: Teams that want everything in one platform and have the budget for it.

Datadog APM is not just tracing — it is a full application performance monitoring suite with distributed tracing as one feature. It connects traces to logs, metrics, infrastructure, and error tracking in a single pane.

- Automatic instrumentation: Datadog's agent auto-instruments most popular frameworks and libraries. Less manual work than open-source tools.

- Live search: Every ingested span is searchable for 15 minutes at full resolution; after that, only spans kept by retention filters stay indexed. This is expensive but powerful.

- Service map: Automatically generates a dependency graph of your services based on trace data.

- Error tracking: Groups similar errors, tracks regressions, assigns to teams.
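A service map like this falls out of the span data itself. The sketch below shows the general idea, not Datadog's implementation: whenever a span's parent belongs to a different service, count a call edge from the parent's service to the child's. The MapSpan shape is hypothetical.

```typescript
// Hypothetical minimal span shape for building a dependency graph.
interface MapSpan {
  spanId: string;
  parentId?: string;
  service: string;
}

// Count "caller -> callee" edges across any number of spans.
function serviceEdges(spans: MapSpan[]): Map<string, number> {
  const byId = new Map<string, MapSpan>();
  for (const s of spans) byId.set(s.spanId, s);
  const edges = new Map<string, number>();
  for (const s of spans) {
    const parent = s.parentId !== undefined ? byId.get(s.parentId) : undefined;
    // Skip roots and in-process children (same service as the parent).
    if (!parent || parent.service === s.service) continue;
    const key = `${parent.service} -> ${s.service}`;
    edges.set(key, (edges.get(key) ?? 0) + 1);
  }
  return edges;
}

const spans: MapSpan[] = [
  { spanId: "1", service: "gateway" },
  { spanId: "2", parentId: "1", service: "auth" },
  { spanId: "3", parentId: "1", service: "payment" },
  { spanId: "4", parentId: "3", service: "payment" }, // e.g. a DB query inside payment
  { spanId: "5", parentId: "3", service: "notification" },
];
console.log(serviceEdges(spans));
```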

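Pricing: $31/host/month for APM, plus usage-based span charges on top: ingested spans beyond the per-host allowance are billed by volume (about $0.10 per GB), and indexed spans beyond the included amount are billed per million. A team with 20 hosts and moderate traffic can expect $1,000-3,000/month for APM alone.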
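To sanity-check an estimate like this, it helps to split the bill into a fixed per-host part and a usage-based part. The sketch below does only that arithmetic. Every price is an input; the $2-per-million span price and the span volumes in the example are hypothetical placeholders, not Datadog's rates. includedSpans is treated as a single total, so multiply by the host count first if a vendor's allowance is per host.

```typescript
// All prices are inputs; take them from the vendor's current pricing page.
interface PricingInputs {
  hosts: number;
  pricePerHost: number; // $ per host per month
  spansPerMonth: number; // billable span volume
  includedSpans: number; // total allowance before overage applies
  pricePerMillionSpans: number; // $ per million spans beyond the allowance
}

function monthlyCost(p: PricingInputs): { hostCost: number; spanCost: number; total: number } {
  const hostCost = p.hosts * p.pricePerHost;
  const billableSpans = Math.max(0, p.spansPerMonth - p.includedSpans);
  const spanCost = (billableSpans / 1e6) * p.pricePerMillionSpans;
  return { hostCost, spanCost, total: hostCost + spanCost };
}

// 20 hosts at $31; span volume and span price are hypothetical placeholders.
const estimate = monthlyCost({
  hosts: 20,
  pricePerHost: 31,
  spansPerMonth: 500e6,
  includedSpans: 20e6,
  pricePerMillionSpans: 2,
});
console.log(estimate); // { hostCost: 620, spanCost: 960, total: 1580 }
```

The host component alone is 20 × $31 = $620, so most of the spread in a $1,000-3,000 bill comes from the usage-based line items, which is exactly where the unpredictability lives.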

Downsides: Cost. Datadog's pricing is notoriously unpredictable. Indexed spans, custom metrics, and log ingestion all have separate charges that add up. Vendor lock-in is also significant — migrating away from Datadog is a multi-month project.

5. Honeycomb

Best for: Teams that need to debug complex, high-cardinality issues in distributed systems.

Honeycomb approaches tracing differently. Instead of storing traces in a traditional database, it treats every span as an event in a high-cardinality columnar store. This means you can query across any combination of attributes without pre-defining indexes.

- BubbleUp: Automatically identifies which attributes differ between slow and fast requests. Instead of guessing, the tool shows you the root cause.

- High cardinality: Query by user ID, request ID, shopping cart contents, feature flag — any attribute, at any cardinality, without performance degradation.

- SLOs: Built-in SLO tracking tied to trace data. Define your error budget and Honeycomb shows you burn rate in real time.

- OpenTelemetry: First-class OTLP support. Honeycomb was an early advocate of OpenTelemetry.
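The core idea behind BubbleUp can be illustrated with a simple comparison. This is a simplified sketch, not Honeycomb's algorithm: split events into a slow group and a baseline group at a hypothetical latency threshold, then rank every attribute value by how much more often it appears among the slow requests.

```typescript
// Hypothetical event shape: one wide event per request.
interface RequestEvent {
  durationMs: number;
  attrs: Record<string, string>;
}

interface Difference {
  attr: string;
  value: string;
  slowShare: number; // fraction of slow events with this value
  baselineShare: number; // fraction of other events with this value
}

function bubbleUp(events: RequestEvent[], slowMs: number, limit = 3): Difference[] {
  const slow = events.filter((e) => e.durationMs >= slowMs);
  const rest = events.filter((e) => e.durationMs < slowMs);
  if (slow.length === 0 || rest.length === 0) return [];
  const share = (group: RequestEvent[], attr: string, value: string): number =>
    group.filter((e) => e.attrs[attr] === value).length / group.length;

  // Every attribute/value pair seen in any event, deduplicated.
  const pairs = new Map<string, [string, string]>();
  for (const e of events) {
    for (const [attr, value] of Object.entries(e.attrs)) {
      pairs.set(JSON.stringify([attr, value]), [attr, value]);
    }
  }

  const diffs: Difference[] = Array.from(pairs.values()).map(([attr, value]) => ({
    attr,
    value,
    slowShare: share(slow, attr, value),
    baselineShare: share(rest, attr, value),
  }));
  // Most over-represented among slow requests first.
  diffs.sort((a, b) => b.slowShare - b.baselineShare - (a.slowShare - a.baselineShare));
  return diffs.slice(0, limit);
}

const events: RequestEvent[] = [
  { durationMs: 900, attrs: { region: "eu", plan: "free" } },
  { durationMs: 950, attrs: { region: "eu", plan: "pro" } },
  { durationMs: 100, attrs: { region: "us", plan: "free" } },
  { durationMs: 120, attrs: { region: "us", plan: "pro" } },
];
console.log(bubbleUp(events, 500, 1));
```

In this toy data, region = eu comes out on top: it appears in every slow request and in none of the fast ones, while plan is evenly split and therefore uninformative.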

Pricing: Free tier (20M events/month). Pro at $130/month for 100M events. Enterprise pricing is custom.

Downsides: Not an all-in-one platform. If you also need infrastructure monitoring, log management, and synthetics, you will need additional tools. The learning curve for effective querying is steeper than Datadog's point-and-click interface.

Comparison Table

| Tool | Type | Storage | Best For | Starting Price |
|---|---|---|---|---|
| Jaeger | Open-source | ES/OpenSearch, Cassandra, Badger | K8s-native teams | Free (infra costs) |
| Zipkin | Open-source | In-memory, MySQL, ES, Cassandra | Simple setups | Free (infra costs) |
| Grafana Tempo | Open-source | Object storage (S3/GCS/Azure Blob) | Grafana stack users | Free (Grafana Cloud free tier) |
| Datadog APM | SaaS | Managed | All-in-one teams | $31/host/month |
| Honeycomb | SaaS | Managed | Debugging complex systems | Free (20M events/month) |

When You Don't Need Distributed Tracing

Here is the part that tracing vendors do not want you to read. Distributed tracing solves a specific problem: understanding request flow across multiple services. If you do not have multiple services, you do not have that problem.

You probably don't need tracing if:

- You run a monolith. A single Next.js application, a single Rails app, a single Django project. There is no "distributed" in your architecture. Traces with one span are just... logs.

- Your team has fewer than 5 engineers. The operational overhead of running and using a tracing system outweighs the debugging benefits when everyone already knows the codebase.

- You deploy a single app on Vercel (or a similar PaaS). A single application on Vercel, Netlify, or Railway, even when it runs as serverless functions, does not have the inter-service communication patterns that tracing excels at visualizing.

- You have fewer than 5 services. With 2-3 services, structured logging with a correlation ID gives you 80% of the value at 10% of the complexity.

- Your budget is under $500/month for observability. Commercial tracing tools eat through budgets fast. Open-source tools eat through DevOps time. Either way, it is expensive.
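For the 2-3 service case, the correlation-ID approach can be as small as the sketch below: reuse an incoming ID or mint a new one, write every log line as JSON that includes it, and forward it on outgoing calls. The x-correlation-id header name is a common convention assumed here, not a standard, and the ID generator is illustrative only.

```typescript
// Hypothetical header name; pick one and use it in every service.
const CORRELATION_HEADER = "x-correlation-id";

// Reuse the caller's ID if present; otherwise this service starts a new one.
function correlationIdFrom(headers: Record<string, string | undefined>): string {
  return headers[CORRELATION_HEADER] ?? newId();
}

// Illustrative ID generator; not cryptographically strong.
function newId(): string {
  return Date.now().toString(36) + "-" + Math.random().toString(36).slice(2, 10);
}

// One JSON object per line, so any log tool can filter by correlationId.
function logLine(correlationId: string, service: string, msg: string, fields: Record<string, unknown> = {}): string {
  // Fixed keys go last so extra fields cannot overwrite them.
  const line = JSON.stringify({ ...fields, ts: new Date().toISOString(), correlationId, service, msg });
  console.log(line);
  return line;
}

// Headers to attach to an outgoing call so the next service logs the same ID.
function outgoingHeaders(correlationId: string): Record<string, string> {
  return { [CORRELATION_HEADER]: correlationId };
}

const id = correlationIdFrom({}); // no incoming header: start a new ID
logLine(id, "checkout", "order received", { orderId: "o-123" });
const next = outgoingHeaders(id); // pass as headers when calling the payment service
```

Searching your log tool for one correlation ID then returns every line from every service that touched the request, which covers most of what a small system would use traces for.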

What you need instead is monitoring. Not tracing. Monitoring.

Monitoring answers: "Is this endpoint healthy? How fast is it? Is the error rate increasing?" Tracing answers: "Why was this specific request slow, and which service caused it?" Most teams need the first answer long before they need the second.
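The monitoring questions above reduce to a few per-endpoint numbers. This sketch computes request count, error rate (share of 5xx responses), and nearest-rank p95 latency per route from hypothetical request records, plus a simple threshold alert; the record shape and the 5% / 1,000 ms thresholds are assumptions.

```typescript
// Hypothetical record of one handled request.
interface RequestRecord {
  route: string; // e.g. "POST /api/checkout"
  statusCode: number;
  durationMs: number;
}

interface EndpointStats {
  route: string;
  count: number;
  errorRate: number; // share of responses with status >= 500
  p95Ms: number; // nearest-rank 95th percentile latency
}

// Nearest-rank percentile on an ascending, non-empty array.
function percentile(sorted: number[], p: number): number {
  const rank = Math.ceil((p / 100) * sorted.length);
  return sorted[Math.max(0, rank - 1)];
}

function endpointStats(records: RequestRecord[]): EndpointStats[] {
  const byRoute = new Map<string, RequestRecord[]>();
  for (const r of records) {
    const list = byRoute.get(r.route) ?? [];
    list.push(r);
    byRoute.set(r.route, list);
  }
  return Array.from(byRoute.entries()).map(([route, rs]) => {
    const durations = rs.map((r) => r.durationMs).sort((a, b) => a - b);
    const errors = rs.filter((r) => r.statusCode >= 500).length;
    return { route, count: rs.length, errorRate: errors / rs.length, p95Ms: percentile(durations, 95) };
  });
}

// Alert when an endpoint breaches either threshold.
function shouldAlert(s: EndpointStats, maxErrorRate = 0.05, maxP95Ms = 1000): boolean {
  return s.errorRate > maxErrorRate || s.p95Ms > maxP95Ms;
}

const records: RequestRecord[] = [
  { route: "POST /api/checkout", statusCode: 200, durationMs: 300 },
  { route: "POST /api/checkout", statusCode: 500, durationMs: 400 },
  { route: "POST /api/checkout", statusCode: 200, durationMs: 250 },
  { route: "POST /api/checkout", statusCode: 200, durationMs: 350 },
];
for (const s of endpointStats(records)) {
  if (shouldAlert(s)) console.log(`ALERT ${s.route}: error rate ${s.errorRate}, p95 ${s.p95Ms}ms`);
}
```

None of this needs span IDs, propagation, or a trace store: a counter and a latency list per route answer "is it healthy, how fast, and is it getting worse".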

The Monitoring Alternative for Smaller Architectures

If you are running a Next.js application — whether on Vercel, AWS, or a VPS — what you actually need is per-endpoint visibility: response times, error rates, status codes, and alerts when things break.

Nurbak Watch is built for exactly this scenario. It monitors your Next.js API routes from inside the server using the instrumentation.ts hook. Five lines of code, $29/month flat (free during beta), and alerts via Slack, email, or WhatsApp in under 10 seconds.

```typescript
// instrumentation.ts
// Next.js calls register() once when a new server instance starts.
import { initWatch } from '@nurbak/watch'

export function register() {
  initWatch({
    apiKey: process.env.NURBAK_WATCH_KEY,
  })
}
```

No collectors to deploy. No storage backends to manage. No sampling strategies to configure. If your architecture is a Next.js app with API routes, this gives you the visibility that matters without the complexity that does not.

Save distributed tracing for when you actually have a distributed system.