Your API gateway sits in front of every request. It handles authentication, rate limiting, routing, and sometimes caching. It's the single point that every API call passes through.

Which makes it the single point where things can go wrong without anyone noticing.

A misconfigured rate limit silently throttles 15% of legitimate traffic. A cache invalidation bug serves stale data for hours. An integration timeout cascades into 504s that your backend logs don't capture because the request never reached your server.

This guide covers what to monitor on your API gateway, specific setups for AWS API Gateway and Kong, and the critical gap that gateway-level monitoring leaves at the application layer.

API Gateway Metrics That Actually Matter

Most gateways expose dozens of metrics. These are the five that prevent outages:

1. Request Latency (Total vs Integration)

Total latency is the full round-trip time from when the gateway receives the request to when it sends the response. Integration latency is just the time your backend takes to process it. The difference is gateway overhead — auth checks, request transformation, logging.

// What the numbers tell you:
// Total latency: 450ms
// Integration latency: 120ms
// Gateway overhead: 330ms ← Something is wrong at the gateway level

// Healthy ratio: gateway overhead should be < 20% of total latency
// If overhead is > 50%, check: auth middleware, request/response transforms,
// logging plugins, WAF rules

If integration latency is low but total latency is high, the problem is in the gateway, not your backend. This distinction saves hours of debugging in the wrong place.
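The overhead arithmetic above can be sketched as a small helper. This is a minimal illustration, not part of any gateway SDK: the function name `classifyGatewayOverhead` and the status labels are hypothetical, and treating the band between 20% and 50% as "elevated" is an assumption. Only the 20% and 50% cutoffs come from the rule of thumb above.

```typescript
// Hypothetical helper: splits total latency into backend time and gateway overhead.
type OverheadStatus = "healthy" | "elevated" | "investigate-gateway";

interface OverheadReport {
  overheadMs: number;
  overheadRatio: number; // fraction of total latency spent in the gateway
  status: OverheadStatus;
}

function classifyGatewayOverhead(totalMs: number, integrationMs: number): OverheadReport {
  if (totalMs <= 0 || integrationMs < 0 || integrationMs > totalMs) {
    throw new RangeError("expected totalMs > 0 and 0 <= integrationMs <= totalMs");
  }
  const overheadMs = totalMs - integrationMs;
  const overheadRatio = overheadMs / totalMs;

  // Assumed labels: below 20% is healthy, above 50% points at the gateway.
  let status: OverheadStatus = "elevated";
  if (overheadRatio < 0.2) status = "healthy";
  else if (overheadRatio > 0.5) status = "investigate-gateway";

  return { overheadMs, overheadRatio, status };
}

const report = classifyGatewayOverhead(450, 120);
console.log(report.overheadMs, report.status); // 330 investigate-gateway
```

With the numbers from the example (450ms total, 120ms integration), the gateway accounts for roughly 73% of the round trip, well past the 50% mark.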

2. Error Rates — 4xx vs 5xx

Split these into client errors and server errors, because the response is completely different:

- 4xx spike — Usually means a client-side change: broken frontend deployment, expired API keys, changed request format. Check if a deployment happened recently.

- 5xx spike — The gateway or backend is failing. Check integration timeouts, backend health, and gateway configuration changes.

- 429 (Too Many Requests) — Rate limiting kicked in. Could be legitimate protection or misconfigured limits throttling real users.

- 502/504 — Backend is unreachable or too slow. The gateway timed out waiting for your server.

A global 5xx rate of 0.5% looks healthy even when every one of those errors comes from a single route that is failing outright. Always monitor errors per route, not just globally.
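To see how a low global rate can hide a failing route, here is a minimal sketch that groups request records by route. The `RequestRecord` shape and the function name `serverErrorRateByRoute` are assumptions for illustration; in practice the records would come from your gateway's access logs or metrics export.

```typescript
// Hypothetical record shape, e.g. parsed from gateway access logs.
interface RequestRecord {
  route: string;
  status: number;
}

interface RouteErrorRate {
  route: string;
  total: number;
  serverErrors: number;
  rate: number; // serverErrors / total
}

// Computes the 5xx rate per route, worst route first.
function serverErrorRateByRoute(records: RequestRecord[]): RouteErrorRate[] {
  const counts: Record<string, { total: number; serverErrors: number }> = {};
  for (const { route, status } of records) {
    const entry = counts[route] ?? { total: 0, serverErrors: 0 };
    entry.total += 1;
    if (status >= 500 && status <= 599) entry.serverErrors += 1;
    counts[route] = entry;
  }
  return Object.keys(counts)
    .map((route) => {
      const c = counts[route];
      return { route, total: c.total, serverErrors: c.serverErrors, rate: c.serverErrors / c.total };
    })
    .sort((a, b) => b.rate - a.rate);
}

// 1,000 requests with 5 failures: a 0.5% global rate.
const sample: RequestRecord[] = [];
for (let i = 0; i < 995; i++) sample.push({ route: "/api/products", status: 200 });
for (let i = 0; i < 5; i++) sample.push({ route: "/api/checkout", status: 504 });

const byRoute = serverErrorRateByRoute(sample);
console.log(byRoute[0].route, byRoute[0].rate); // /api/checkout 1
```

The global rate here is 0.5%, yet the checkout route fails on every request.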

3. Throttling Rate

How many requests are being rejected by rate limits. Some throttling is intentional (protecting your backend from abuse). Too much throttling means legitimate users are being blocked:

// Healthy: 0.1% of requests throttled (bots, scrapers)
// Warning: 2-5% throttled, check if limits are too aggressive
// Critical: 10%+ throttled, you're losing real users

// Common mistake: setting per-IP limits that hit users behind
// corporate NATs (thousands of employees, one external IP)

4. Cache Hit Rate

If your gateway caches responses, the hit rate tells you if caching is actually working:

- Above 80%: Caching is effective. At an 80% hit rate, your backend handles one fifth of the raw request count.

- 50-80%: Decent, but check TTL settings and cache key configuration.

- Below 50%: Something is wrong. Either cache keys are too specific (every request is unique) or TTLs are too short.

- Sudden drop: Cache was invalidated or the cache layer went down. Backend is getting hammered with full traffic.
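The relationship between hit rate and backend load is simple arithmetic: only misses reach the backend. The sketch below is illustrative; `summarizeCache` and the `CacheStats` fields are hypothetical names, and the hit and miss counts are assumed to come from whatever counters your gateway exposes.

```typescript
interface CacheStats {
  hitRate: number;         // fraction of requests served from cache
  backendRequests: number; // requests that still reach the backend
  reductionFactor: number; // raw request count divided by backend requests
}

// Hypothetical helper: derives hit rate and backend load from hit/miss counters.
function summarizeCache(hits: number, misses: number): CacheStats {
  if (hits < 0 || misses < 0) throw new RangeError("counts must be non-negative");
  const total = hits + misses;
  if (total === 0) return { hitRate: 0, backendRequests: 0, reductionFactor: 1 };
  return {
    hitRate: hits / total,
    backendRequests: misses,
    reductionFactor: misses === 0 ? Infinity : total / misses,
  };
}

const stats = summarizeCache(8000, 2000);
console.log(stats.hitRate, stats.reductionFactor); // 0.8 5
```

At a 50% hit rate the same calculation gives a factor of 2, which is why a sudden drop in hit rate can double or triple backend traffic without any change in request volume.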

5. Request Count by Route and Method

Traffic distribution tells you where to focus optimization and where to set tighter limits:

// Typical API traffic distribution:
// GET /api/products → 45% of traffic (cacheable, optimize here first)
// GET /api/users/me → 22% of traffic (auth-dependent, watch latency)
// POST /api/events → 18% of traffic (write-heavy, watch error rate)
// POST /api/checkout → 3% of traffic (low volume, highest value)
// Other → 12% of traffic

// The endpoint with 3% traffic might generate 80% of your revenue.
// Monitor it like it's your most important route, because it is.

Monitoring AWS API Gateway

AWS API Gateway publishes metrics to CloudWatch automatically. Here's how to set up meaningful monitoring:

Enable Detailed Metrics

By default, AWS API Gateway only publishes aggregate metrics. To get per-route breakdowns, enable detailed metrics on your stage:

# AWS CLI: enable detailed metrics for every resource and method on the stage
aws apigateway update-stage \
  --rest-api-id your-api-id \
  --stage-name prod \
  --patch-operations \
    'op=replace,path=/*/*/metrics/enabled,value=true'

Or in your CloudFormation / CDK template:

# CloudFormation
Resources:
  ApiStage:
    Type: AWS::ApiGateway::Stage
    Properties:
      StageName: prod
      RestApiId: !Ref MyApi
      MethodSettings:
        - HttpMethod: "*"
          ResourcePath: "/*"
          MetricsEnabled: true
          DataTraceEnabled: false # Don't log request/response bodies
          ThrottlingBurstLimit: 500
          ThrottlingRateLimit: 1000

Key CloudWatch Metrics

| Metric | What it measures | Alert when |

|---|---|---|

| Count | Total API requests | Drop > 30% from baseline (traffic loss) |

| Latency | Full request-response time | P95 > 2x baseline |

| IntegrationLatency | Backend processing time only | P95 > 1 second |

| 4XXError | Client error count | Rate > 5% sustained |

| 5XXError | Server error count | Any sustained > 0.1% |

| CacheHitCount | Responses served from cache | Hit rate drops below 50% |
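The "Alert when" column can be read as a set of checks over one evaluation window. The sketch below encodes those thresholds directly. Everything in it is hypothetical: the `GatewayWindow` shape, the field names, and the alert labels are illustrative, and it evaluates a single window, so the "sustained" condition (several consecutive windows) is left out.

```typescript
// Hypothetical summary of one evaluation window of gateway metrics.
interface GatewayWindow {
  count: number;
  baselineCount: number;
  p95LatencyMs: number;
  baselineP95LatencyMs: number;
  p95IntegrationLatencyMs: number;
  errors4xx: number;
  errors5xx: number;
  cacheHits: number;
  cacheMisses: number;
}

// Returns the names of the thresholds from the table that this window breaches.
function evaluateAlerts(w: GatewayWindow): string[] {
  const alerts: string[] = [];
  if (w.baselineCount > 0 && w.count < w.baselineCount * 0.7) alerts.push("traffic-drop");
  if (w.p95LatencyMs > 2 * w.baselineP95LatencyMs) alerts.push("latency");
  if (w.p95IntegrationLatencyMs > 1000) alerts.push("integration-latency");
  if (w.count > 0) {
    if (w.errors4xx / w.count > 0.05) alerts.push("4xx-rate");
    if (w.errors5xx / w.count > 0.001) alerts.push("5xx-rate");
  }
  const cacheTotal = w.cacheHits + w.cacheMisses;
  if (cacheTotal > 0 && w.cacheHits / cacheTotal < 0.5) alerts.push("cache-hit-rate");
  return alerts;
}

const current: GatewayWindow = {
  count: 6000, baselineCount: 10000,
  p95LatencyMs: 900, baselineP95LatencyMs: 500,
  p95IntegrationLatencyMs: 300,
  errors4xx: 120, errors5xx: 30,
  cacheHits: 4000, cacheMisses: 1000,
};
console.log(evaluateAlerts(current).join(",")); // traffic-drop,5xx-rate
```

In a real setup these checks would live in CloudWatch alarms rather than application code; the sketch only makes the thresholds explicit.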

CloudWatch Alarm Example

# Alert when the 5xx error rate exceeds 1% for 5 minutes
# (for 5XXError, the Average statistic is the error rate; Sum is the raw count)
aws cloudwatch put-metric-alarm \
  --alarm-name "api-gateway-5xx-rate" \
  --namespace "AWS/ApiGateway" \
  --metric-name "5XXError" \
  --dimensions Name=ApiName,Value=MyAPI Name=Stage,Value=prod \
  --statistic Average \
  --period 300 \
  --threshold 0.01 \
  --comparison-operator GreaterThanThreshold \
  --evaluation-periods 1 \
  --alarm-actions arn:aws:sns:us-east-1:123456789012:alerts

Monitoring Kong Gateway

Kong exposes metrics through plugins. The Prometheus plugin is the standard approach for production monitoring:

Enable the Prometheus Plugin

# Enable globally (all services and routes)
curl -X POST http://localhost:8001/plugins \
  --data "name=prometheus" \
  --data "config.per_consumer=false" \
  --data "config.status_code_metrics=true" \
  --data "config.latency_metrics=true" \
  --data "config.bandwidth_metrics=true"

Or declaratively in kong.yml:

# kong.yml
plugins:
  - name: prometheus
    config:
      per_consumer: false
      status_code_metrics: true
      latency_metrics: true
      bandwidth_metrics: true

Key Prometheus Metrics

Once enabled, Kong exposes metrics at :8001/metrics:

# Request count per service, route, and status code
kong_http_requests_total{service="users-api",route="get-users",code="200"} 48293
kong_http_requests_total{service="users-api",route="get-users",code="500"} 12

# Latency histograms (request, kong processing, upstream)
kong_request_latency_ms_bucket{service="users-api",le="100"} 45000
kong_request_latency_ms_bucket{service="users-api",le="500"} 47800
kong_request_latency_ms_bucket{service="users-api",le="1000"} 48200
kong_upstream_latency_ms_bucket{service="users-api",le="100"} 47500
kong_upstream_latency_ms_bucket{service="users-api",le="500"} 48100

# Kong processing overhead
kong_kong_latency_ms_bucket{service="users-api",le="10"} 48000
kong_kong_latency_ms_bucket{service="users-api",le="50"} 48290

Grafana Dashboard Queries

# Error rate per service (PromQL)
sum(rate(kong_http_requests_total{code=~"5.."}[5m])) by (service)
  /
sum(rate(kong_http_requests_total[5m])) by (service)

# P95 latency per route
histogram_quantile(0.95,
  sum(rate(kong_request_latency_ms_bucket[5m])) by (le, route)
)

# Throughput per route (requests per second)
sum(rate(kong_http_requests_total[5m])) by (route)

The Gap: What Gateways Don't Monitor

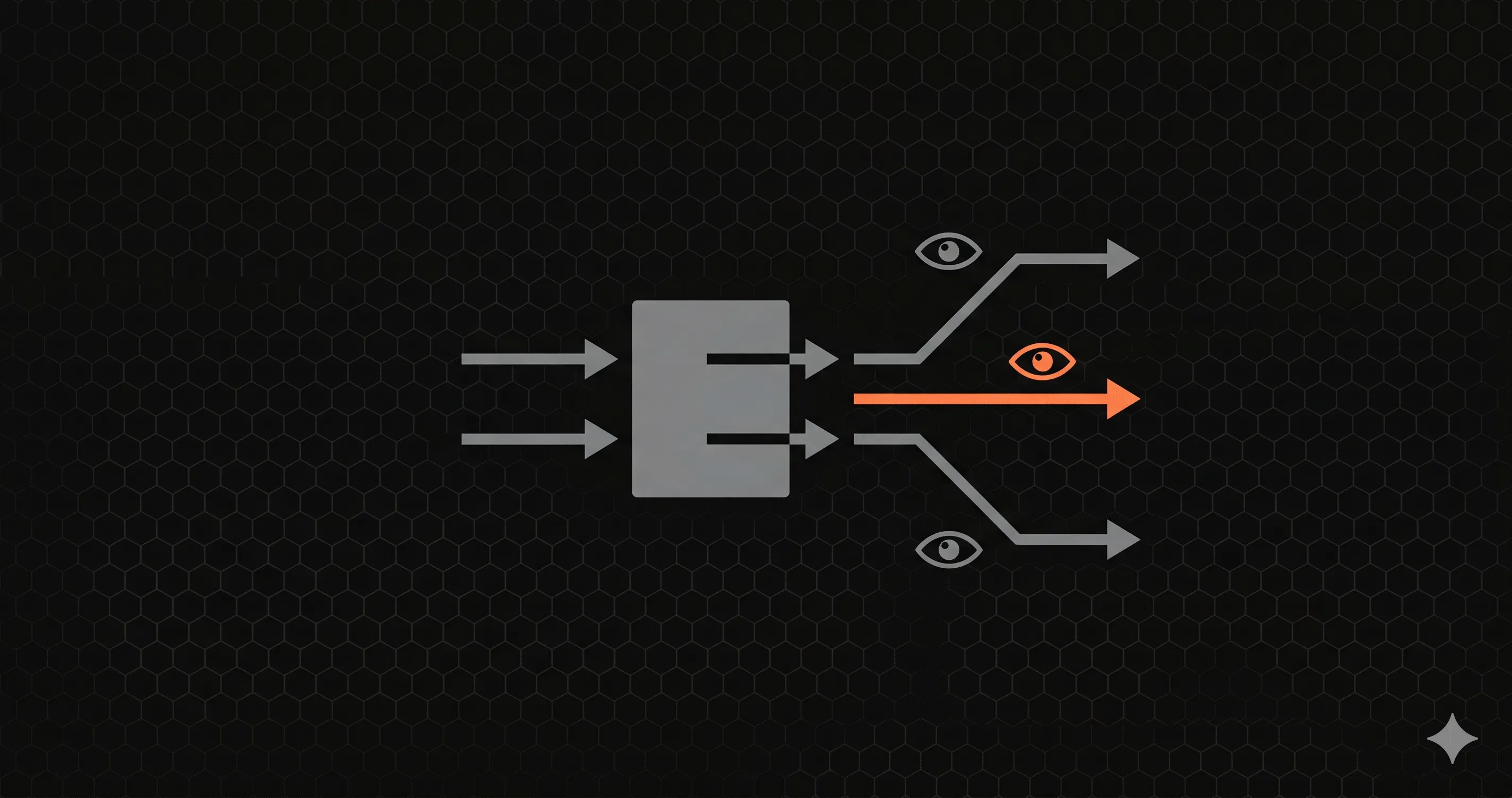

Gateway metrics tell you what happened at the network edge. They don't tell you what happened inside your application. This creates a significant blind spot:

| What gateways see | What gateways miss |

|---|---|

| Total request latency | Where inside the app the time was spent (DB, cache, computation) |

| HTTP status codes | Business logic errors returned as 200 with error payloads |

| Request count per route | Which database queries each route runs and how slow they are |

| Gateway-level throttling | Application-level concurrency issues (connection pool exhaustion) |

| Upstream health (binary: up/down) | Upstream degradation (responding but slow, partial failures) |

Real scenario: Your Kong dashboard shows GET /api/products at 450ms P95. Is that good or bad? You don't know, because you can't see that 400ms of it comes from an N+1 query pattern on a lookup that took 40ms before someone added a .populate() call last Tuesday.

Gateway monitoring tells you the symptom. Application-level monitoring tells you the cause.
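One of the blind spots in the table, business errors returned as 200, is easy to illustrate. The sketch below flags successful responses whose body still signals a failure. The payload conventions it checks (`error` set, or `success: false`) are assumptions; your API may use a different shape, and the names `ObservedResponse` and `isHiddenFailure` are hypothetical.

```typescript
// Hypothetical shape for a response observed inside the application.
interface ObservedResponse {
  status: number;
  body: unknown;
}

// True when the status is 2xx but the payload reports a failure.
// Assumed conventions: { error: ... } or { success: false }.
function isHiddenFailure(res: ObservedResponse): boolean {
  if (res.status < 200 || res.status > 299) return false; // the gateway already counts these
  const body = res.body;
  if (typeof body !== "object" || body === null) return false;
  const record = body as Record<string, unknown>;
  return record["error"] != null || record["success"] === false;
}

console.log(isHiddenFailure({ status: 200, body: { error: "payment declined" } })); // true
console.log(isHiddenFailure({ status: 200, body: { items: [] } }));                 // false
```

A gateway counts both of those responses as healthy 200s. Only code running next to the handler can tell them apart.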

Filling the Gap with Application-Level Monitoring

For Next.js applications behind an API gateway, Nurbak Watch provides the application-level visibility that gateways can't:

// instrumentation.ts: runs inside your Next.js server
import { initWatch } from '@nurbak/watch'

export function register() {
  initWatch({
    apiKey: process.env.NURBAK_WATCH_KEY,
  })
}

This runs inside your server process, downstream of the gateway. It sees every request that reaches your server, after auth, rate limiting, and caching. What it adds to your monitoring:

- Server-side latency per route — How long your code takes, excluding gateway overhead. When gateway latency is 450ms but server latency is 40ms, you know the problem is at the gateway layer.

- Real error rates — Including errors your gateway doesn't see, like 200 responses with error payloads, or exceptions caught and returned as degraded responses.

- Cold start tracking — On Vercel, every cold start looks like a slow request to the gateway. Nurbak Watch tracks cold start frequency and duration separately.

- Instant alerts — Slack, email, or WhatsApp in under 10 seconds. Gateway alerts typically go through CloudWatch or Prometheus alerting pipelines with 1-5 minute delays.
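To make "server-side latency per route" concrete, here is a library-agnostic sketch of the idea. It is not Nurbak Watch's API: `RouteLatencyRecorder`, its methods, and the injectable clock are hypothetical names, and it only handles synchronous handlers to keep the example short.

```typescript
// Hypothetical sketch: records how long handlers take, grouped by route.
type Clock = () => number; // returns a timestamp in milliseconds

class RouteLatencyRecorder {
  private readonly samples: Partial<Record<string, number[]>> = {};
  private readonly now: Clock;

  constructor(now: Clock = () => Date.now()) {
    this.now = now;
  }

  // Runs a synchronous handler and records its duration, even if it throws.
  time<T>(route: string, handler: () => T): T {
    const start = this.now();
    try {
      return handler();
    } finally {
      const list = this.samples[route] ?? [];
      list.push(this.now() - start);
      this.samples[route] = list;
    }
  }

  // Mean recorded duration for a route, or undefined if it has no samples.
  averageMs(route: string): number | undefined {
    const list = this.samples[route];
    if (!list || list.length === 0) return undefined;
    return list.reduce((sum, ms) => sum + ms, 0) / list.length;
  }
}

const recorder = new RouteLatencyRecorder();
recorder.time("/api/products", () => ({ items: [] }));
console.log(recorder.averageMs("/api/products"));
```

Comparing this number with the gateway's total latency for the same route gives the same split as total versus integration latency, measured from inside the application.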

Recommended Stack

| Layer | Tool | What it monitors |

|---|---|---|

| Gateway | CloudWatch (AWS) or Prometheus (Kong) | Traffic shape, throttling, cache, gateway errors |

| Application | Nurbak Watch | Server-side latency, error rates, cold starts, per-route metrics |

| Uptime | External ping (UptimeRobot, free) | Total outage detection from outside |

Three layers, three perspectives, full coverage. The gateway watches the front door. Nurbak Watch watches every room. The uptime ping confirms the building is still standing.

Get Started — Free During Beta

If you're running Next.js behind an API gateway and want application-level visibility without deploying another agent:

- Go to nurbak.com and create an account

- Run npm install @nurbak/watch

- Add 5 lines to instrumentation.ts

- Deploy

Nurbak Watch is in beta and free during launch. It complements your gateway monitoring with the application-level metrics you're currently blind to.